Turning an ML model into AI: strategies, best practices, and nuances

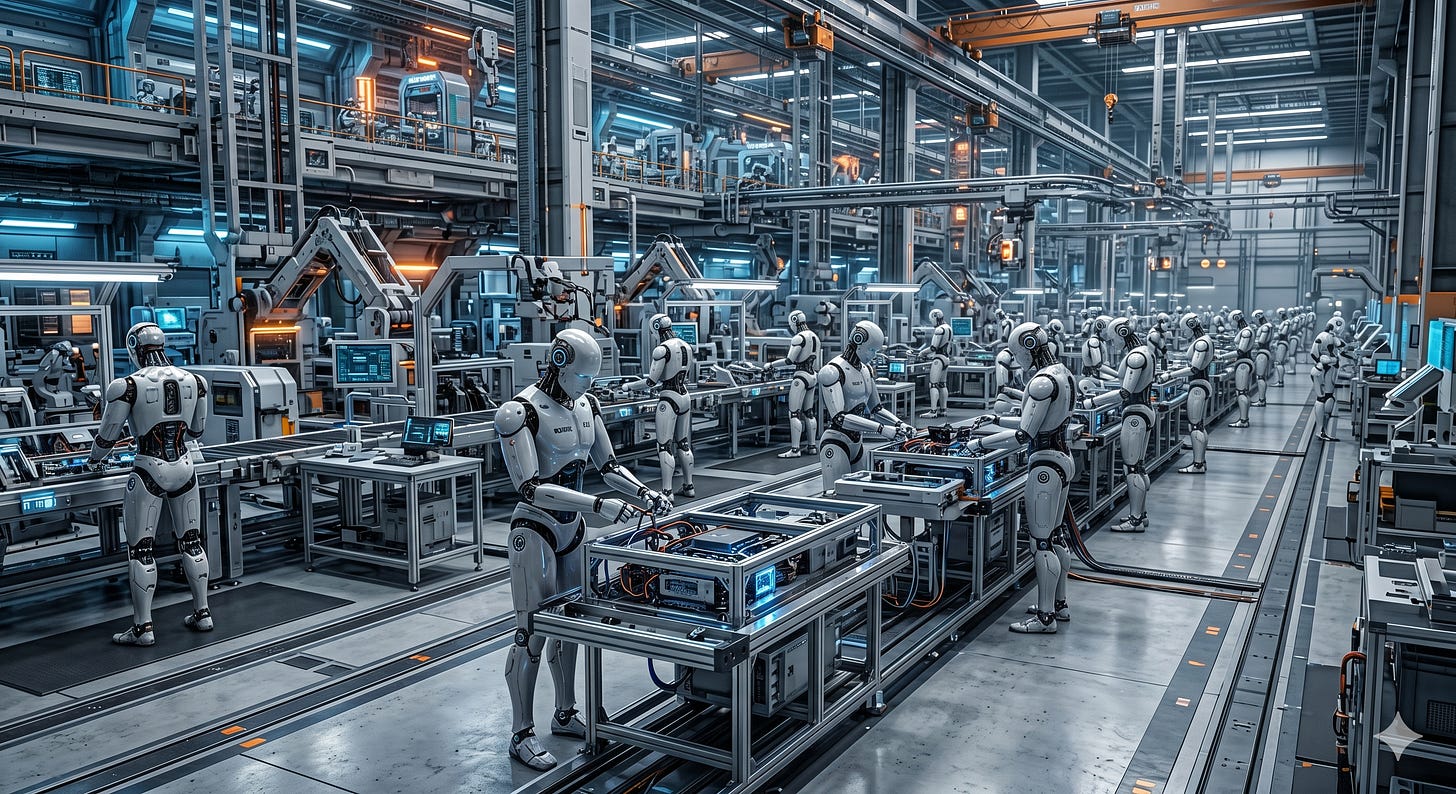

An ML model isn't AI. It becomes AI when it's integrated into real, usable software that can use the model in a meaningful way. Every AI engineer must know how to do this integration properly.

This article continues a series that, together, forms a detailed guide on how to become a successful AI engineer. The series covers both the fundamental skills you need if you are new to the field and the blind spots you may have if you already are an AI engineer.

The goal of this multi-part guide is to teach you the skills that will help you become a professional that companies would be looking to hire in the emerging, lucrative, and in-demand field of AI engineering. The previous articles of the series can be found here:

Today, we will talk about one of the most fundamental competencies of an AI engineer, which is understanding what AI actually is.

There’s way too much confusion in this area. It’s not uncommon to hear an LLM referred to as AI. Or, on the contrary, to hear that an LLM is not a “proper AI” because “it hasn’t reached the level of AGI yet”.

But both of these perspectives are wrong. Firstly, “AI” doesn’t necessarily mean “AGI”. “Artificial intelligence” is a very wide term that broadly means “a system that can make complex, intelligent decisions”. It doesn’t mean that the system is sentient, sapient, conscious, or self-aware, and it doesn’t mean that the system can match a human at any intellectual task. That last part is what AGI (Artificial General Intelligence) usually refers to.

On the other hand, an LLM (or any other ML model, for that matter) is not AI. It only becomes “AI” when it can actually be used, i.e., when it’s embedded in an executable software product that can use the model in a meaningful way. For example, while ChatGPT is AI, the GPT model it relies on isn’t, on its own. While Claude is AI, the Opus model it relies on isn’t.

This is why any AI engineer will laugh at a sensational newspaper headline talking about a new OpenAI or Anthropic model “gaining consciousness” or “finding security vulnerabilities on its own”. A model will never do anything on its own. It only does stuff when it’s triggered to do stuff. Yes, there’s a concept of “emergent abilities”, where the model does something that it wasn’t expected to have learned. However, it will never do anything unless a human or a software system asks it to.

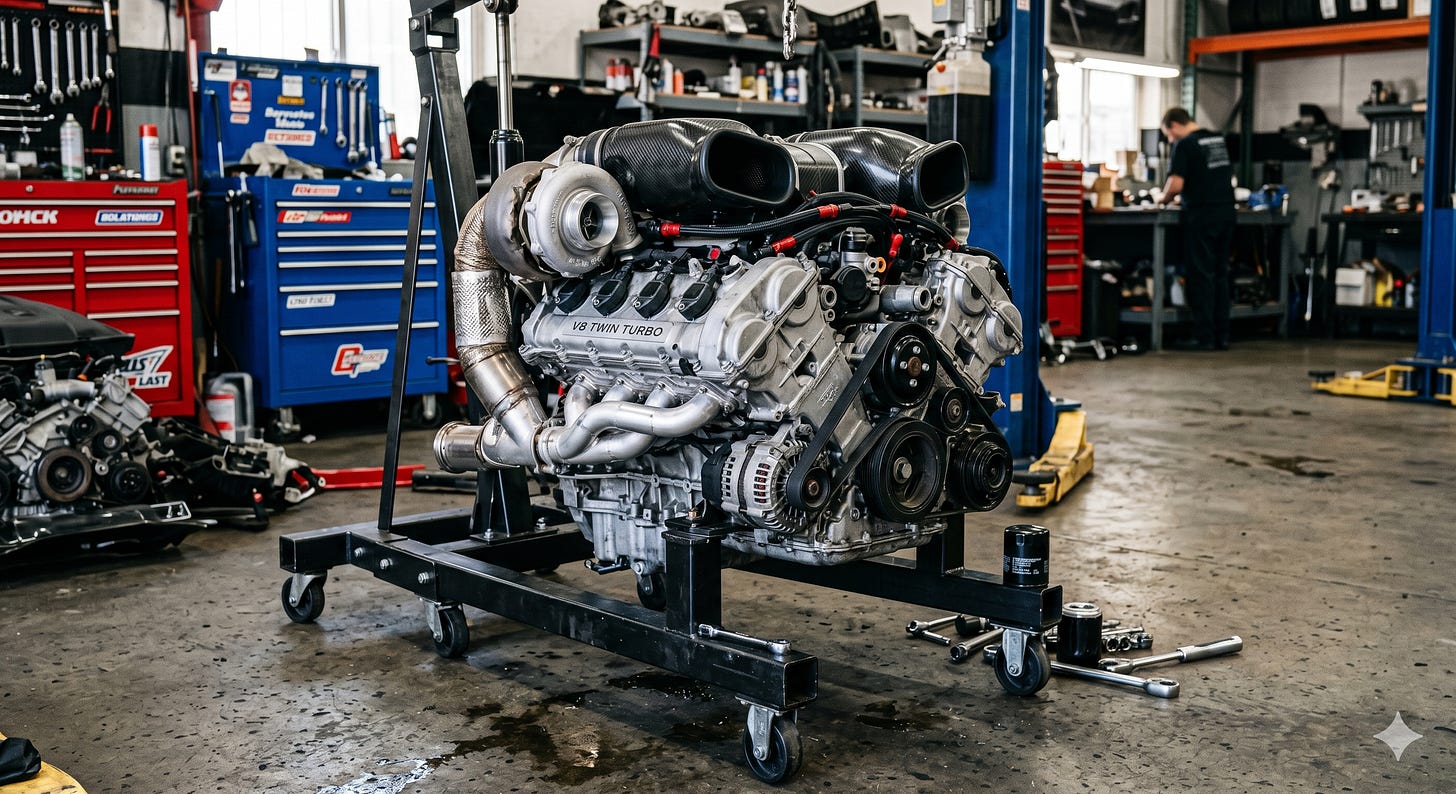

Think of AI as a car. An LLM is its engine. A car cannot operate without an engine. The engine is one of its critical components, without which its basic functionality is impossible. However, the engine on its own cannot do anything either. If you take it out of the car, it won’t go anywhere.

In this article, we will talk about the process of turning ML models into AI by integrating them with executable software. You will learn the basic principles of such integration, strategies for choosing the right model for the right task, best practices, and nuances you are likely to encounter while integrating models in real, commercial systems. We will answer the following questions:

How are ML models integrated into your codebase?

How do you choose the right model for the right job?

Should you self-host the model or use an externally hosted one?

To make this knowledge stick, you will also build your own tech support bot by integrating ML models with code. It won’t be just a tutorial on how to add models to software. We will go through the process of architecting our application for ML model integration and strategically choosing the right models to add.

All these skills are important in real commercial projects. One of the reasons AI engineers are paid so well is that they aren’t just the people who know how to call the GPT API from code. No, it’s way more than that. AI engineers are the people who can engineer and architect AI-enabled software. And this is what you will learn.

How an ML model fits into software

To understand how an ML model fits into software, we will first need to understand what a model is. The easiest way to describe it is as a complex data structure combined with a complex mathematical algorithm, either presented as a single file or as a distributed structure comprising many components.
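To make the "data structure plus algorithm" idea concrete, here is a deliberately tiny sketch. The class name `TinyModel`, its weights, and the urgency-scoring task are all made up for illustration; a real model has millions or billions of learned parameters, but the shape is the same: stored numbers plus a procedure that turns an input into an output.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class TinyModel:
    """A toy 'model': stored parameters (data) plus a scoring rule (algorithm)."""

    weights: dict[str, float]  # hypothetical values; a real model learns these from data
    bias: float

    def predict(self, text: str) -> float:
        """Return a 0..1 score for how urgent a support message looks."""
        tokens = text.lower().split()
        score = self.bias + sum(self.weights.get(t, 0.0) for t in tokens)
        return 1.0 / (1.0 + math.exp(-score))  # logistic function


# The "data structure" part: parameters that would normally be loaded from a file.
model = TinyModel(weights={"crash": 2.0, "error": 1.5, "thanks": -2.0}, bias=-1.0)

# The "algorithm" part only runs when some code calls it.
urgent = model.predict("app crash on login")
calm = model.predict("thanks for the help")
```

Note that nothing happens until `predict` is called. The model is inert data and logic until some piece of software invokes it.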

Each model has its own API: the interface that allows it to accept inputs and produce outputs. This API may be directly compatible with a specific programming language, such as Python. Alternatively, if the interface of the model isn’t directly compatible with the programming language the application is written in, the model is accessed via a wrapper that exposes an API in that language. For example, in ML.NET, such a wrapper would be a class that implements the ITransformer interface.
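The wrapper idea can be sketched in a few lines of Python. Everything here is hypothetical and exists only for illustration: `TextModel` is the interface the application codes against, `RawKeywordModel` stands in for a model whose native interface (lists of tokens) doesn't match what the application wants (plain strings), and `KeywordModelWrapper` bridges the two. It is not how any particular library does it.

```python
from typing import Protocol


class TextModel(Protocol):
    """The interface the application depends on (hypothetical name)."""

    def generate(self, prompt: str) -> str: ...


class RawKeywordModel:
    """Stand-in for a model with a native, token-based interface."""

    def run(self, tokens: list[str]) -> list[str]:
        if "password" in tokens:
            return ["reset", "your", "password", "from", "the", "login", "page"]
        return ["please", "describe", "the", "problem", "in", "more", "detail"]


class KeywordModelWrapper:
    """Adapter that exposes RawKeywordModel through the TextModel interface."""

    def __init__(self, raw: RawKeywordModel) -> None:
        self._raw = raw

    def generate(self, prompt: str) -> str:
        tokens = prompt.lower().split()          # app string -> model input
        return " ".join(self._raw.run(tokens))   # model output -> app string


def answer_ticket(model: TextModel, ticket: str) -> str:
    """Application code: knows only the interface, not the model behind it."""
    return model.generate(ticket).capitalize() + "."


reply = answer_ticket(KeywordModelWrapper(RawKeywordModel()), "I forgot my password")
```

Because `answer_ticket` depends only on `TextModel`, the stand-in model could later be swapped for a self-hosted or externally hosted one by writing another wrapper, without touching the application code.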

The following diagram shows how an ML model fits into software:
